Artificial Intelligence: Are machines improving our lives?

In this blog series, we turn our attention to the growing role of artificial intelligence (AI) in the medical and automotive industries. In this first part, we look at some of the key challenges in implementing safe and reliable AI.

An increasing number of products across the world use machine learning or artificial intelligence techniques. However, machine learning has been around for decades, so this is not an entirely new topic. One key question is why you would use AI rather than rely on a human being to carry out an activity. The answer can be addressed using the usability technique of function analysis, which assesses which activities are best undertaken by a person and which lend themselves better to technology.

| Human | Machine |
| --- | --- |
| Endurance: fatigues easily | Does not fatigue easily |
| Significant time needed for decision-making and movement | High speed |
| Unreliable, makes constant and variable errors | Great accuracy attainable |
| Limited short-term working memory | Excellent for repetitive work |
| Visual information processing system extremely logical and flexible | Needs to be monitored |
| Perception: ability to make order out of complex situations | Decision-making limited |
| Can reason inductively, can follow up intuition | Inductive reasoning not possible |

Table 1: Function Analysis

From this sort of analysis of human and machine strengths and weaknesses we can pinpoint the areas where AI is best deployed.
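Function analysis is normally carried out as a structured review rather than in code, but the reasoning behind Table 1 can be sketched as a simple tally. In this minimal Python sketch, the task attributes and the scoring rule are hypothetical assumptions chosen for illustration; a real allocation would weigh many more factors.

```python
from dataclasses import dataclass


# Hypothetical task description: the demands a task places on whoever performs it.
# The attribute names are illustrative assumptions, loosely based on Table 1.
@dataclass
class Task:
    name: str
    repetitive: bool          # long runs of the same action (fatigue risk for a human)
    needs_high_speed: bool    # decisions or movements faster than human reaction time
    needs_intuition: bool     # inductive reasoning or judgement in novel situations
    complex_perception: bool  # making order out of an unstructured, changing scene


def allocate(task: Task) -> str:
    """Suggest 'human', 'machine' or 'shared' by tallying Table 1 strengths."""
    machine_score = int(task.repetitive) + int(task.needs_high_speed)
    human_score = int(task.needs_intuition) + int(task.complex_perception)
    if machine_score > human_score:
        return "machine"
    if human_score > machine_score:
        return "human"
    # Tie: strengths on both sides, so split the task and keep a person
    # monitoring the machine (Table 1: the machine "needs to be monitored").
    return "shared"


cell_counting = Task("Count cells on slide images", repetitive=True,
                     needs_high_speed=True, needs_intuition=False,
                     complex_perception=False)
print(allocate(cell_counting))  # machine
```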

AI models can be divided roughly into two groups:

- Locked models
- Continuous learning (adaptive) models

A locked model does not change its algorithm or its output automatically: the same input always produces the same output. An adaptive model, however, continually updates both the algorithm and the output in response to identified improvements. The challenges for a continuous learning model are therefore significantly greater than those for a locked model, because its outputs are continually evolving.
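To make the distinction between the two groups concrete, here is a minimal Python sketch in which a hypothetical one-feature classifier is either frozen at release or allowed to adjust its decision threshold from field feedback. The classes, the threshold and the perceptron-style update rule are illustrative assumptions, not a method taken from any standard or product.

```python
class LockedModel:
    """Parameters are frozen at release: the same input always gives the same output."""

    def __init__(self, threshold: float):
        self.threshold = threshold

    def predict(self, x: float) -> bool:
        return x > self.threshold


class AdaptiveModel(LockedModel):
    """Keeps updating its own threshold from feedback after deployment."""

    def __init__(self, threshold: float, learning_rate: float = 0.1):
        super().__init__(threshold)
        self.learning_rate = learning_rate

    def update(self, x: float, label: bool) -> None:
        # Assumed perceptron-style rule: change the threshold only when the
        # prediction was wrong. A false positive raises the threshold and a
        # false negative lowers it.
        prediction = self.predict(x)
        if prediction != label:
            direction = 1.0 if prediction else -1.0
            self.threshold += direction * self.learning_rate


locked, adaptive = LockedModel(0.5), AdaptiveModel(0.5)
print(locked.predict(0.55), adaptive.predict(0.55))  # True True
adaptive.update(0.55, label=False)  # field feedback: that was a false positive
print(locked.predict(0.55), adaptive.predict(0.55))  # True False
```

The last line shows why an adaptive model is harder to assure: after a single feedback update, the same input yields a different output, so evidence gathered before release no longer fully describes the behaviour of the deployed model.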

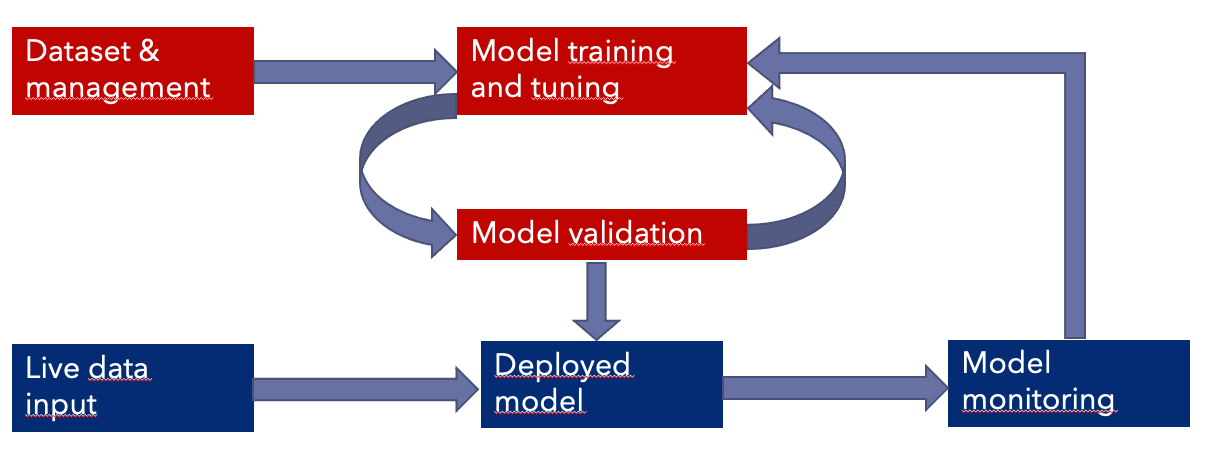

A dataset is fed into the model as indicated in Figure 1, this dataset is then used to validate the model during the development phase. Prior to it being deployed in the product, like all good systems, post deployment feedback is used to further train and improve the model. However, the dataset needs to be representative of the live data input.

Figure 1: AI Model Process Flow
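One common way to check whether training data is still representative of live input is to compare their distributions. This minimal Python sketch computes the Population Stability Index (PSI) for a single numeric feature. The feature (patient age), the bin edges and the example values are hypothetical, and PSI is only one of several possible drift measures.

```python
import math
from bisect import bisect_right


def histogram(values, edges):
    """Fraction of values in each bin defined by sorted interior bin edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(train, live, edges, eps=1e-6):
    """PSI = sum over bins of (live% - train%) * ln(live% / train%)."""
    p = histogram(train, edges)
    q = histogram(live, edges)
    psi = 0.0
    for pt, ql in zip(p, q):
        pt, ql = max(pt, eps), max(ql, eps)  # avoid log(0) for empty bins
        psi += (ql - pt) * math.log(ql / pt)
    return psi


# Hypothetical ages in the training set versus those seen after deployment.
train_ages = [25, 32, 41, 47, 53, 58, 62, 66, 74, 82]
live_ages = [38, 55, 63, 68, 71, 74, 77, 81, 85, 88]
edges = [50, 65, 80]  # bins: under 50, 50-64, 65-79, 80 and over
print(round(population_stability_index(train_ages, live_ages, edges), 2))  # 0.81
```

A commonly quoted rule of thumb treats a PSI above about 0.25 as a significant shift, although any threshold would need to be justified for the specific product.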

Ultimately, the quality of the output of either type of model depends on the quality of the dataset used to train it.

In AI, inference is the process of generating conclusions from evidence and facts. One of the biggest challenges, and one of the largest risk analysis challenges, is ensuring that the dataset fed into the AI model is free from bias and representative of the product's intended use. Again, as with Table 1, we look to the world of usability to help define this approach. Understanding the demographics of the dataset is key, as is being able to estimate how any deviation from the optimal dataset affects model and algorithm performance. There have been numerous examples of AI making incorrect decisions after being trained on an inappropriate dataset, and we shall look at some of these examples in the next two parts of this blog.
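The demographic check described above can be sketched as a small report that compares each subgroup's share of a validation set with its expected share in the intended-use population, alongside the model's accuracy for that subgroup. In this minimal Python sketch the age bands, target shares and records are hypothetical, and accuracy stands in for whatever performance metric the risk analysis actually calls for.

```python
from collections import Counter, defaultdict


def subgroup_report(records, target_mix):
    """
    records: iterable of (subgroup, prediction, truth) tuples from a validation set.
    target_mix: expected share of each subgroup in the intended-use population.
    Returns {subgroup: (share_gap, accuracy)}, where
    share_gap = share in the dataset - expected share.
    """
    records = list(records)
    counts = Counter(group for group, _, _ in records)
    correct = defaultdict(int)
    for group, prediction, truth in records:
        correct[group] += int(prediction == truth)
    total = len(records)
    report = {}
    for group, target_share in target_mix.items():
        n = counts.get(group, 0)
        share_gap = n / total - target_share
        # NaN flags a subgroup with no data at all, which is itself a finding.
        accuracy = correct[group] / n if n else float("nan")
        report[group] = (share_gap, accuracy)
    return report


# Hypothetical validation records: (age band, model output, ground truth).
records = [("18-64", 1, 1)] * 8 + [("65+", 1, 1), ("65+", 0, 1)]
report = subgroup_report(records, {"18-64": 0.5, "65+": 0.5})
for group, (gap, acc) in report.items():
    print(f"{group}: share gap {gap:+.2f}, accuracy {acc:.2f}")
# 18-64: share gap +0.30, accuracy 1.00
# 65+: share gap -0.30, accuracy 0.50
```

An under-represented subgroup that also shows lower accuracy, as the older group does here, is the kind of deviation the risk analysis would need to explain or mitigate.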

There are many international organisations working on AI guidance. At the moment, draft standards such as ISO/IEC AWI TR 5469 Artificial Intelligence – Functional safety and AI systems and ISO/IEC CD 23894 Artificial Intelligence Risk Management are particularly relevant to our work as a consultancy. The Association for the Advancement of Medical Instrumentation (AAMI) and the Food and Drug Administration (FDA) have also recently published literature on the subject.

In the subsequent parts of this blog series, we shall look at some of the applications being implemented in both the automotive and medical device sectors, and at the advantages this technology can bring.

By Alastair Walker, Consultant

Do you want to learn more about the implementation of ISO/SAE 21434, AAMI TIR57 or any other standard in the Medical Device or Automotive sector? We work remotely with you. Please contact us at info@lorit-consultancy.com for bespoke consultancy or join one of our upcoming online courses.