Beyond Hardware Faults: Traditional Safety Tools Don’t Get Software

In safety, what worked for wires and circuits doesn’t always work for lines of code: analyzing software is a different challenge from analyzing hardware or physical systems. Traditional methods like Functional Hazard Assessment (FHA), Failure Modes and Effects Analysis (FMEA), and Fault Tree Analysis (FTA), which ARP 4761 describes and prescribes for aviation, have proven successful at spotting physical component failures. But applying them to software is like trying to fix broken code with a wrench: the wrong tool for decoding the quirks and complexities of software behavior.

Hardware tends to misbehave for the classic reasons: component degradation, environmental stress, manufacturing defects or just plain bad luck. Examples include a sensor that stops sending signals, a chip that overheats, or a wire that frays. Such failures are typically random in nature, but statistics make them predictable in aggregate, like reading the future through failure rates.

Software, on the other hand, does not experience physical degradation. It executes exactly as specified by its code, which is both its strength and its weakness. When software “fails,” the cause is not random. It is a systematic fault, such as incorrect or incomplete requirements, design mistakes, implementation bugs, or unexpected interactions between software components.

Note that systematic faults are not exclusive to software; hardware can also suffer from design or manufacturing defects. In software, however, faults are inherently systematic.

A particularly tricky part of software safety is just how many things software can do, or think it should do. The challenge arises from the potentially large state space of software systems: their behavior depends on multiple factors, such as input data values, internal system states, execution timing, and interactions with other system components.

Pulling all of this together leads to a combinatorial explosion of possible execution paths. As a result, applying methods such as FMEA or FTA directly to software becomes a dizzying task. It might be technically possible, but it is overwhelmingly complicated and difficult to manage due to the huge number of potential conditions that must be considered.
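To give a feel for the numbers, here is a minimal sketch that counts the combinations for a single, made-up software function. The factor names and their values are assumptions chosen purely for illustration; they do not come from any real system, and real software typically has far more (and far less tidy) factors.

```python
from itertools import product
from math import prod

# Hypothetical factors influencing one software function (illustrative only).
factors = {
    "flight_mode": ["ground", "takeoff", "cruise", "approach"],
    "sensor_a_valid": [True, False],
    "sensor_b_valid": [True, False],
    "autopilot_engaged": [True, False],
    "input_range": ["below_min", "nominal", "above_max"],
}


def count_combinations(factors):
    """Return the number of distinct combinations of factor values."""
    return prod(len(values) for values in factors.values())


def enumerate_combinations(factors):
    """Yield every combination as a dict; only feasible for small models."""
    names = list(factors)
    for values in product(*factors.values()):
        yield dict(zip(names, values))


total = count_combinations(factors)
print(total)           # 4 * 2 * 2 * 2 * 3 = 96
# Ten additional independent boolean flags multiply the total by 2**10.
print(total * 2**10)   # 98304
```

Even this toy model yields 96 combinations, and every extra two-valued flag doubles the count, before timing and ordering effects are considered at all.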

Nataša Simanić John, Functional Safety Consultant


Methods like FMEA rely on identifying specific and predictable component failure modes, such as “stuck open,” “short circuit,” or “loss of signal.” These failure modes are tangible and associated with physical components whose behaviours under failure conditions are well understood.

Software, however, does not fail in such obvious ways or exhibit such discrete failure modes. Instead, software errors are more like logic puzzles gone wrong: they may manifest as incorrect calculations or logical conditions, improper timing or sequencing, or unexpected responses to certain inputs.

Since complex logic and multiple interacting states dictate software behavior, it is difficult to enumerate all possible “failure modes” in the way one can for hardware components. It is almost like trying to predict all the ways a chess game might go wrong before the first move.

Besides qualitative approaches, safety assessment methods such as FTA also employ probabilities to quantify system reliability. This works well for hardware components, as they typically have measurable failure rates (e.g., failures per flight hour) derived from historical data or reliability testing. You can measure, predict, and plan for them.
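As a rough illustration of how a fault tree is quantified, the sketch below combines basic-event probabilities through AND and OR gates. It assumes the basic events are statistically independent, and the component names and probability values are hypothetical, picked only to make the arithmetic visible.

```python
from math import prod


def and_gate(probabilities):
    """Output occurs only if all independent input events occur."""
    return prod(probabilities)


def or_gate(probabilities):
    """Output occurs if at least one independent input event occurs."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical failure probabilities per flight hour (illustrative only).
p_sensor_1 = 1e-4
p_sensor_2 = 1e-4
p_power_supply = 1e-6

# Top event "loss of signal": both redundant sensors fail, OR the shared
# power supply fails.
p_both_sensors = and_gate([p_sensor_1, p_sensor_2])
p_top = or_gate([p_both_sensors, p_power_supply])
print(f"{p_top:.3e}")
```

With these assumed numbers the top event comes out at roughly 1.01e-6 per flight hour, dominated by the single shared power supply rather than by the redundant sensors. The calculation only makes sense because each input has a measurable failure rate.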

Software, on the other hand, does not have a meaningful probabilistic failure rate. If a software defect exists, the problem will appear deterministically, every time the triggering condition is met. In other words, in terms of failure probabilities, software is binary by nature. If a bug exists, it will trigger under the right conditions with probability 1; if no bug exists, it will never trigger, so the probability is 0.
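A small sketch makes the point concrete. The function below is a made-up example (its name, parameters and the defect itself are assumptions for illustration): it has a latent defect that is triggered by exactly one input condition, and it behaves identically on every run.

```python
def scale_reading(raw_counts, full_scale_counts):
    """Convert raw sensor counts to a fraction of full scale (hypothetical).

    Latent defect: full_scale_counts == 0 is never checked.
    """
    return raw_counts / full_scale_counts


def fails(raw_counts, full_scale_counts):
    """Return True if the call raises, False if it completes normally."""
    try:
        scale_reading(raw_counts, full_scale_counts)
        return False
    except ZeroDivisionError:
        return True


# The triggering input fails on every single run; a nominal input never does.
trigger_results = [fails(100, 0) for _ in range(1000)]
nominal_results = [fails(100, 4096) for _ in range(1000)]
print(all(trigger_results))   # True
print(any(nominal_results))   # False
```

Repeating the call a thousand times adds no statistical information: the outcome is fixed by the input, not by chance. What remains uncertain is how often the triggering condition occurs in operation, which is a property of the environment rather than of the code.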

Because of these limitations, addressing software safety requires a different approach. Rather than analyzing failure modes and assigning probabilities, aviation safety standards address software through process assurance.

The primary standard governing airborne software development is RTCA’s Software Considerations in Airborne Systems and Equipment Certification, widely known as DO-178C. This rulebook provides a “recipe” of sorts for airborne software, intended to ensure that the code is produced with a level of confidence that complies with airworthiness requirements, and that it behaves as it should, every single time.

To support safety, DO-178C employs rigorous processes, addressing:

- System aspects relating to software development
- Software lifecycle
- Software planning
- Software development: requirements, design, coding and integration
- Integral processes: software verification, configuration management, quality assurance, certification liaison

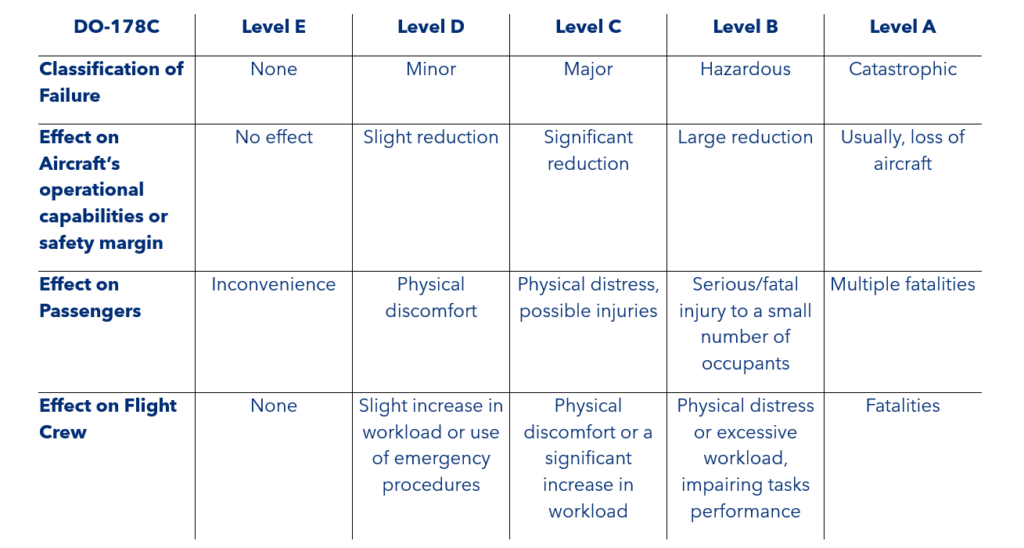

The level of rigor applied depends on the assigned software level, or Item Development Assurance Level (IDAL), which is determined by the severity of the potential system hazards and failures identified during system-level safety assessments. DAL A gets the most scrutiny and is reserved for software whose failure could put lives at risk, such as autopilot software. DAL E is for software that has no safety relevance at all, so the process is much simpler.
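The relationship between failure condition severity and software level can be sketched as a simple lookup. This is a simplified illustration, not a certification tool: the five severity categories and levels A to E follow the commonly used classification, while the `Severity` enum and the `software_level` helper are hypothetical names. In a real programme the level is assigned through the system safety assessment, which may also take the system architecture into account.

```python
from enum import Enum


class Severity(Enum):
    """Failure condition severity categories (simplified)."""
    CATASTROPHIC = "catastrophic"
    HAZARDOUS = "hazardous"
    MAJOR = "major"
    MINOR = "minor"
    NO_SAFETY_EFFECT = "no safety effect"


# Most severe failure condition the software can contribute to -> level.
SEVERITY_TO_LEVEL = {
    Severity.CATASTROPHIC: "A",
    Severity.HAZARDOUS: "B",
    Severity.MAJOR: "C",
    Severity.MINOR: "D",
    Severity.NO_SAFETY_EFFECT: "E",
}


def software_level(severity):
    """Return the software level for a given severity (hypothetical helper)."""
    return SEVERITY_TO_LEVEL[severity]


print(software_level(Severity.CATASTROPHIC))      # A
print(software_level(Severity.NO_SAFETY_EFFECT))  # E
```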

