ASIL – Independence Day?

First we need to establish what ASIL actually means. ASIL is an acronym for Automotive Safety Integrity Level, the classification used in ISO 26262:2018, the standard for functional safety in the automotive world.

In my opinion, ASIL has since become something of a mantra in development, while its purpose is often misunderstood. ASIL was, and still is, an aid for countering the potentially harmful effects of technical defects in an appropriate manner. Or, put simply: reducing possible hazards to an acceptable risk. What counts as “acceptable” is subjective and is not specified in the standard. It is therefore each manufacturer's responsibility to define what it considers acceptable.

In this blog post, we will use the battery cells of an electric vehicle as an example.

Example: the battery cells supply power to the interior lighting. If they fail to do so, the passenger compartment stays dark. This does not, however, endanger the health of the occupants.

This is what is referred to as an acceptable risk, classified as QM: a level that only requires quality requirements to be fulfilled. Accordingly, we do not need dedicated safety functions to prevent the event “no power supply to the interior lighting”.

Example: if, on the other hand, the power supply to the headlamps that illuminate the road fails during a night-time journey, we can expect something very serious to happen. We would assign this case the highest ASIL: ASIL D (how this assignment is made is described below). We therefore develop functions that prevent the headlamps from going out.

So that is what ASIL actually means: ASIL is a classification of functions (safety functions, to be precise). This classification indicates how reliable the function must be against failure. The higher the value, the more care must be taken to ensure that the function cannot fail (the values in ascending order are QM, A, B, C and D).

The standard divides development into the concept phase (ISO 26262 part 3) and product development at the system level (part 4), the hardware level (part 5) and the software level (part 6). Depending on the phase, it specifies which analyses have to be performed to demonstrate that the function satisfies a particular ASIL. The higher the ASIL, the more requirements have to be met and the more analyses have to be performed.

The aim is always to reduce the risk of injury or death to a reasonable, acceptable level.

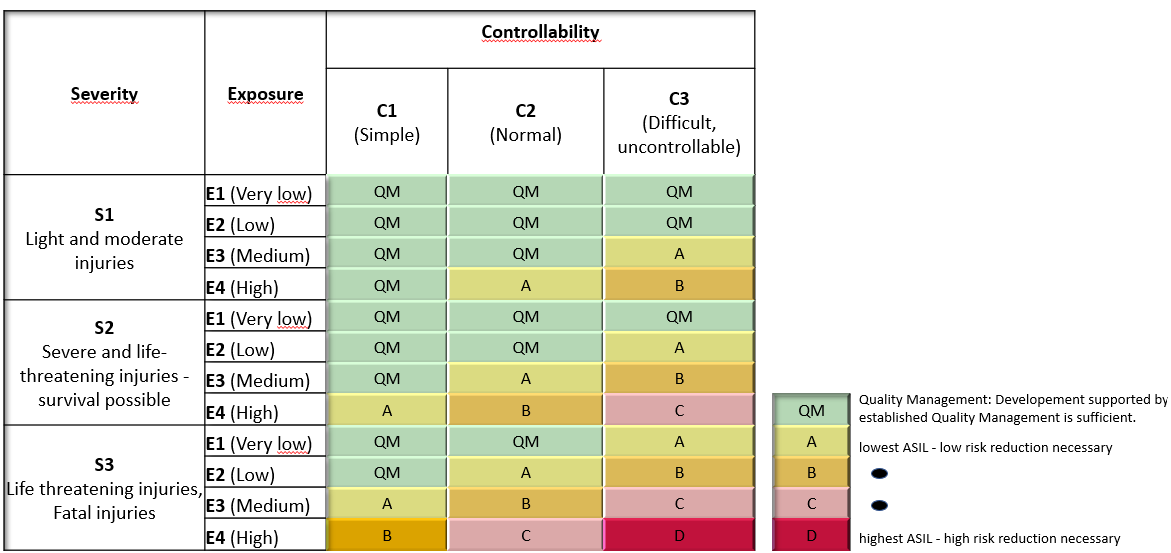

The ASIL is determined by means of a risk analysis (in ISO 26262 terms, the hazard analysis and risk assessment) at the start of each project. Possible scenarios are analysed to determine how severe the effects are (severity), how often the situation occurs (exposure) and how well the driver can control it (controllability).

If the severity of damage is high, the probability of occurrence is high and the driver cannot control the situation, this is considered a high risk. By contrast, rare events with minor damage are evaluated as low risk.

The higher the risk, the more capable the safety function must be, and the higher the ASIL whose requirements it has to meet.
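The risk graph in ISO 26262-3 combines severity (S0 to S3), exposure (E0 to E4) and controllability (C0 to C3) into an ASIL. A frequently cited shortcut reproduces that table: add up the three class indices, and a sum of 10 gives ASIL D, 9 gives C, 8 gives B, 7 gives A, while anything lower gives QM. The sketch below assumes that shortcut; the function name is hypothetical, and the table in the standard remains authoritative.

```python
def determine_asil(s: int, e: int, c: int) -> str:
    """Return the ASIL for a hazardous event rated S0-S3, E0-E4, C0-C3.

    Assumes the additive shortcut for the ISO 26262-3 risk graph:
    S + E + C = 10 -> D, 9 -> C, 8 -> B, 7 -> A, lower -> QM.
    """
    if not (0 <= s <= 3 and 0 <= e <= 4 and 0 <= c <= 3):
        raise ValueError("expected S0-S3, E0-E4 and C0-C3")
    # A class of 0 in any parameter means no ASIL has to be assigned.
    if 0 in (s, e, c):
        return "QM"
    return {10: "D", 9: "C", 8: "B", 7: "A"}.get(s + e + c, "QM")


print(determine_asil(3, 4, 3))  # highest severity, exposure and uncontrollability
print(determine_asil(1, 4, 1))  # minor injuries, easily controllable
```

The parameter values in the usage lines are illustrative only; choosing them for a real hazardous event is exactly the work the risk analysis has to do.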

Especially at the beginning, you will have little experience of which values to use here. However, there is literature to help you. For example, suggestions for the severity of injury can be found in the “Official Journal of the European Union L73/121”, in the last section, “Severity of injury”. For exposure (the probability of occurrence), the situation catalogue VDA 702 lists the E parameters from ISO 26262-3 in table form.

As explained above, the aim is to reduce the risk of dangerous events to an acceptable level. It is therefore worth considering, starting with the architecture, what can reduce this risk.

Example: a safe state for the battery cells is one in which the cells neither emit gases nor explode. In other words, the risk of a cell exploding or emitting gases should be low, i.e. acceptable.

A natural first step would be to design the system in such a way that a dangerous situation can never occur. You would then not need a safety function, as the risk has already been eliminated by the design.

Example: for the battery cells, this would equate to a cell chemistry that never reacts thermally.

If this is not feasible, you define safety functions that monitor the dangerous part of the system and can independently bring it into a safe state. To do this, you investigate which conditions can lead to the dangerous situation and which of them can be detected and prevented in a controlled manner.

Example: examining battery cells reveals that they are sensitive to overcurrent, overvoltage and overtemperature. The corresponding safety functions therefore monitor current, voltage and temperature and, if a defined limit is exceeded, disconnect the cells from the on-board power supply.
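The monitoring logic in this example can be sketched as follows. All class names and limit values are hypothetical placeholders (real limits come from the cell datasheet and the safety concept), and the sketch deliberately ignores sensor faults and timing, which a real implementation would have to handle. Once any limit is exceeded, the monitor latches the safe state (contactor open) and does not close the contactor again by itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CellLimits:
    # Hypothetical limit values for illustration only.
    max_current_a: float = 200.0
    max_voltage_v: float = 4.2
    max_temperature_c: float = 60.0


@dataclass(frozen=True)
class CellMeasurement:
    current_a: float
    voltage_v: float
    temperature_c: float


def limit_violations(m: CellMeasurement, limits: CellLimits) -> list[str]:
    """Return the names of all limits exceeded by one measurement."""
    violations = []
    if abs(m.current_a) > limits.max_current_a:  # charge or discharge
        violations.append("overcurrent")
    if m.voltage_v > limits.max_voltage_v:
        violations.append("overvoltage")
    if m.temperature_c > limits.max_temperature_c:
        violations.append("overtemperature")
    return violations


class BatteryDisconnect:
    """Hypothetical safety function: opens the contactor on any violation."""

    def __init__(self, limits: CellLimits) -> None:
        self.limits = limits
        self.contactor_closed = True

    def step(self, m: CellMeasurement) -> list[str]:
        violations = limit_violations(m, self.limits)
        if violations:
            # Safe state: disconnect the cells from the on-board power supply.
            # Latched: this monitor never closes the contactor again itself.
            self.contactor_closed = False
        return violations


monitor = BatteryDisconnect(CellLimits())
print(monitor.step(CellMeasurement(50.0, 4.35, 25.0)), monitor.contactor_closed)
```

Latching is a design choice here: a cell that has just exceeded a limit should not be reconnected automatically merely because the next reading looks normal.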

We thus try to create an architecture that identifies potential faults and can respond to them correctly.

The functions can be considered adequate once you have, for every risk, an argument showing that it has been reduced to an acceptable level.

The quality of the safety functions depends, among other things, on the quality of the components used. However, hardware components with a low probability of failure are often more expensive.

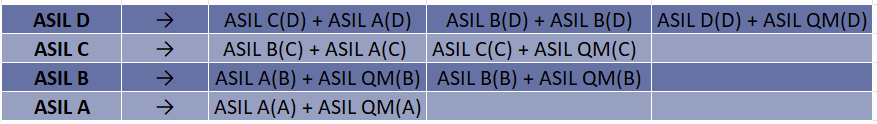

ISO 26262 offers a way around this: ASIL decomposition. This means splitting the function into several simpler, redundant functions.

Lighting is a good example of this: failure of the road illumination (headlamps) during a night-time journey would be critical. The TPV, the forerunner of the familiar 2CV, for example, was equipped with only one headlamp, because that complied with French law at the time. If its single headlamp failed, the road ahead would be dark, i.e. a critical situation.

For this reason, two headlamps were subsequently included. If one of the two fails, then the driver can reach home safely with the one remaining and arrange for repairs. This is what is referred to as “redundancy”.

As a result, each of the two headlamps does not need to be as reliable as the single headlamp had to be.
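The arithmetic behind this can be sketched with simple probabilities. If both headlamps fail independently with probability p, the chance of losing both is p squared, so each one only needs the square root of the overall target. To show why independence matters, the sketch also uses the beta-factor model (a technique added here for illustration, not taken from this post), in which an assumed fraction beta of failures has a common cause that disables both channels at once. The function names and numbers are hypothetical.

```python
import math


def dual_channel_failure_probability(p_single: float, beta: float = 0.0) -> float:
    """First-order estimate that both redundant channels fail.

    p_single: failure probability of one channel (e.g. one headlamp).
    beta:     assumed fraction of failures with a common cause that
              disables both channels at once; 0.0 = fully independent.
    """
    if not (0.0 <= p_single <= 1.0 and 0.0 <= beta <= 1.0):
        raise ValueError("probabilities must lie in [0, 1]")
    independent = ((1.0 - beta) * p_single) ** 2  # both fail on their own
    common_cause = beta * p_single                 # one event disables both
    return independent + common_cause


def required_channel_probability(target: float) -> float:
    """Per-channel failure probability needed so that two identical,
    fully independent channels together meet `target`."""
    return math.sqrt(target)


print(required_channel_probability(1e-6))
print(dual_channel_failure_probability(1e-3))
print(dual_channel_failure_probability(1e-3, beta=0.1))
```

With these hypothetical numbers, a target of 1e-6 for “no road illumination” needs only 1e-3 per headlamp if the two are truly independent. If just 10 % of failures share a common cause, the probability of losing both rises to roughly 1e-4, about a hundred times the target.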

This means that instead of one expensive ASIL D component, you can use two ASIL B(D) components, which are usually significantly cheaper. The parentheses indicate that decomposition has taken place and which ASIL applied at the higher level. ASIL A(C), for example, means that the function itself must meet ASIL A, even though it is part of an ASIL C function. The analyses required for ASIL C are applied at the higher level; the subfunction rated ASIL A(C) only needs to concern itself with ASIL A.

The next image shows how you can break this down:

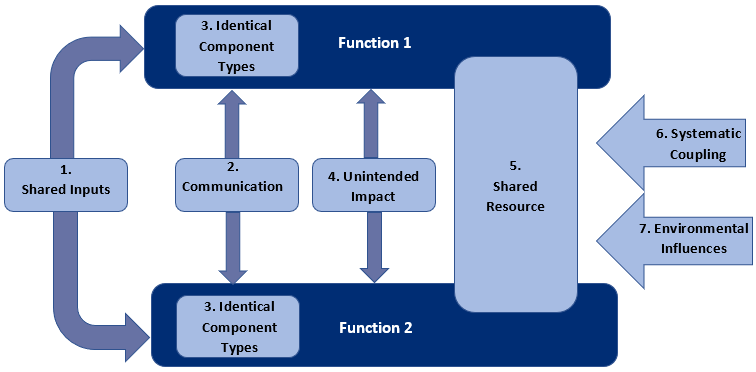
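The decomposition schemes that ISO 26262-9 permits can also be written down as a small lookup table. The sketch below encodes them as we understand the 2018 edition (for example, ASIL D may be split into C(D) + A(D), B(D) + B(D) or D(D) + QM(D)); the function names are hypothetical, and the standard remains the authoritative source. Note that the check only covers the ASIL arithmetic: a pair is only acceptable if the two elements are also sufficiently independent, which no lookup table can establish.

```python
# Permitted decompositions per original ASIL, as pairs of the new ASILs.
DECOMPOSITIONS: dict[str, list[tuple[str, str]]] = {
    "D": [("C", "A"), ("B", "B"), ("D", "QM")],
    "C": [("B", "A"), ("C", "QM")],
    "B": [("A", "A"), ("B", "QM")],
    "A": [("A", "QM")],
}


def decompose(original: str) -> list[tuple[str, str]]:
    """List the permitted pairs in the X(original) notation, e.g. B(D)."""
    return [(f"{a}({original})", f"{b}({original})")
            for a, b in DECOMPOSITIONS.get(original, [])]


def is_valid_decomposition(original: str, first: str, second: str) -> bool:
    """True if the two new ASILs form a permitted pair (in either order)."""
    pairs = DECOMPOSITIONS.get(original, [])
    return (first, second) in pairs or (second, first) in pairs


print(decompose("D"))
print(is_valid_decomposition("D", "B", "B"))
```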

What is important here, however, is the independence of the two newly created functions. If an incident were able to turn off both simultaneously, the decomposition would make no sense.

Independence does not mean that

This applies to both hardware and software.

Yet independence is not only about faults. The standard also refers to “freedom from interference”, which specifically concerns other functions interfering with the safety function. An illustrative example is shared memory: the safety function stores its monitoring values there, and the radio overwrites them with the titles of the next songs. The safety function then evaluates the system state based on incorrect data and is no longer reliable. The conclusion is that the memory used by safety functions must be kept separate.
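The idea of separate memory can be illustrated with a toy model of memory partitioning, similar in spirit to what a memory protection unit enforces in hardware. Every region has exactly one owner that may write to it; any other function may only read. The class name, region names and owners below are hypothetical, and a Python object is of course no substitute for hardware-enforced protection.

```python
class PartitionedMemory:
    """Toy model of partitioned memory: one writer (owner) per region."""

    def __init__(self) -> None:
        self._owner: dict[str, str] = {}
        self._data: dict[str, object] = {}

    def allocate(self, region: str, owner: str) -> None:
        if region in self._owner:
            raise ValueError(f"region {region!r} is already allocated")
        self._owner[region] = owner

    def write(self, caller: str, region: str, value: object) -> None:
        if self._owner.get(region) != caller:
            # Interference blocked: only the owning function may write here.
            raise PermissionError(f"{caller!r} may not write to {region!r}")
        self._data[region] = value

    def read(self, region: str) -> object:
        return self._data.get(region)


mem = PartitionedMemory()
mem.allocate("cell_monitor", owner="safety_function")
mem.allocate("playlist", owner="radio")
mem.write("safety_function", "cell_monitor", 41.0)
try:
    mem.write("radio", "cell_monitor", "Title of the next song")
except PermissionError as err:
    print("blocked:", err)
print(mem.read("cell_monitor"))
```

The radio's faulty write is rejected instead of silently corrupting the monitoring value, so the safety function keeps working on correct data.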

Possible dependencies are identified through analyses. We explore which initiators trigger dependent failures and how we can respond to them: through changes to the architecture, wiring diagrams or software code, or through safety mechanisms that interrupt the chain of faults or prevent it entirely. To this end, we try to analyse everything systematically:

For software, the analyses focus on shared memory, possible resource bottlenecks, timing and logical dependencies, and which components receive which information and when. Besides the shared memory mentioned above, deadlocks and livelocks are typical examples of software problems: the implementation allows a situation in which the function gets stuck and can no longer react.
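A common safety mechanism against a function that gets stuck is a watchdog (deadline monitor): the monitored task must signal (“kick”) it regularly, and an independent monitor triggers the safe state if the signal stops. This technique is added here for illustration; the class name and timing values are hypothetical. Times are passed in explicitly to keep the example deterministic; in a real system they would come from a monotonic clock, and the monitor itself would have to run independently of the task it supervises.

```python
class DeadlineMonitor:
    """Hypothetical software watchdog: the supervised task must kick it at
    least once every `timeout_s` seconds, otherwise it reports a fault."""

    def __init__(self, timeout_s: float, now: float) -> None:
        self.timeout_s = timeout_s
        self.last_kick = now

    def kick(self, now: float) -> None:
        # Called by the supervised task each time it completes a cycle.
        self.last_kick = now

    def expired(self, now: float) -> bool:
        # True once the task has been silent for longer than the timeout,
        # e.g. because it is blocked in a deadlock.
        return now - self.last_kick > self.timeout_s


watchdog = DeadlineMonitor(timeout_s=0.1, now=0.0)
watchdog.kick(now=0.05)              # task is alive
print(watchdog.expired(now=0.12))    # still within the deadline
print(watchdog.expired(now=0.20))    # task stopped kicking: trigger safe state
```

A livelocked task that keeps kicking the watchdog would not be caught by this check alone, which is why deadline monitoring is often combined with checks on the program flow.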

For hardware, the wiring diagrams are analysed, and possible initiators are identified by means of techniques such as fault tree analysis (FTA). Common voltage sources, for example, are a particular focus. Generally speaking, everything that affects several safety functions is analysed. Can a single power supply paralyse several functions simultaneously? Can a faulty clock mistime several monitoring functions so that critical situations go undetected? Or, quite fundamentally, are identical components used that could fail simultaneously due to a common weakness, for example because the temperature is too high?
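The question “what affects several safety functions at once?” can be partly automated once the dependencies of each channel are written down. The sketch below assumes a hypothetical, hand-maintained map from each redundant channel to the resources it relies on (supply, clock, component type) and lists every resource shared by more than one channel. Each hit is a candidate initiator for a dependent failure that still has to be assessed, not a conclusion.

```python
from collections import defaultdict

# Hypothetical architecture: resources each redundant channel depends on.
ARCHITECTURE: dict[str, set[str]] = {
    "headlamp_left": {"supply:12V_A", "clock:osc_1", "part:LED_driver_X"},
    "headlamp_right": {"supply:12V_B", "clock:osc_1", "part:LED_driver_X"},
}


def shared_resources(arch: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return every resource used by more than one channel, with its users."""
    users: dict[str, set[str]] = defaultdict(set)
    for channel, resources in arch.items():
        for resource in resources:
            users[resource].add(channel)
    return {r: chans for r, chans in users.items() if len(chans) > 1}


for resource, channels in sorted(shared_resources(ARCHITECTURE).items()):
    print(resource, "->", sorted(channels))
```

In this made-up architecture the separate supplies are fine, but the shared oscillator and the identical LED driver would both need a closer look.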

The aim here is to discover dependencies and to implement appropriate countermeasures as early as the development stage.

The word ASIL makes many developers tremble, because they associate it with lots of paperwork. Yet an ASIL classification, and the development based on it, is meant to make it easier to demonstrate that you have identified and mitigated problems in a structured manner.

You should not be awestruck by the standard or obsessed with its minutiae, but rather follow the logical path of fault detection and develop suitable safety functions.

If you are uncertain about any of this, Lorit Consultancy is ready to support you with experience gained from many projects.

By Gerrit Steinöcker, Functional Safety Consultant

Do you want to learn more about the implementation of ISO 26262, IEC 60601 or any other standard in the Automotive or Medical Device sector? Please contact us at info@lorit-consultancy.com for bespoke consultancy or join one of our upcoming online courses.